High Achievers, Laggards, Muddlers

By brucelynn

Tags: 70-20-10, Antonio Rodriguez, HP, Microsoft, Mini-Microsoft, performance reviews

Category: Uncategorized

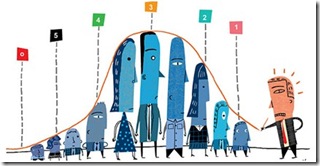

One of my earliest encounters with 70-20-10 came at my professional alma mater, Microsoft, whose Performance Rating system for ‘Contribution Rating’ (long-term capability rather than just the recent year’s performance on the job) was based on a 70-20-10 bell curve of stack ranking…

· 70% – “Demonstrates potential at minimum to broaden in one’s role or to advance one career stage or level as a leader – either as a People Manager and/or individual contributor. Past performance suggests capability of delivering consistent and significant contributions over long-term. Competencies typically are at expected levels.”

· 20% – “Demonstrates potential to advance faster than average as a leader – either as a People Manager and/or individual contributor – preferably multiple levels or two career stages. Past performance suggests capability of delivering exceptional results over long-term. Competencies typically are at or above expected levels.”

· 10% – “Demonstrates limited potential to advance.”

In more colourful terms, this general breakdown was paralleled by an HP executive, Antonio Rodriguez, who blogged about his own ‘20-20-60 rule’ (a slightly different distribution), which “outlines the mix of high achievers, laggards and muddlers in the middle at any large organisation.”

· ‘The Fat’ (60%) – “generally only good at producing and consuming meetings and at looking good in front of their bosses. They don’t take risks, but not because they are too limited (these are not Peter principle folks), but because they are optimizing for a different outcome: their own career advancement.”

· ‘A Players’ (20%) – “the real A players…They have the skills to get things done, the passion, and perhaps most importantly, the patience required to make elephants dance (big companies get stuff done). Every time I meet one of these gems, I walk away believing a little more in the human condition.”

· ‘Peter Principle’ (20%) – “operating above their skill/will level. These are the folks who make up the Peter Principle bucket— promoted beyond their abilities.”

Many debate the appropriateness of such ‘vitality curve’ classifications. In general, I applaud Microsoft’s culture and systems for being very strongly driven by objectives and outcomes. A company that has traditionally had lots of rewards to give out, it has always tried diligently to create as much of a meritocracy as possible, with processes to measure out those rewards methodically and equitably. A few years ago, the leadership even made a newsworthy and ambitious move to reform the system further based on employee feedback and to apply performance distribution curves less rigidly.

My concern about the application of these ‘distributions’ is twofold. First, they are statistical constructs, and most executives lack the numeracy to interpret and work with them properly. For example, while these distributions might hold roughly over large numbers, no reasonable statistician would expect them to hold identically for smaller populations. Yet some companies impose them on teams of just a handful of people, where they cannot even be applied literally: on a team of five, the 10% bucket means half a person. This imposition violates all sorts of statistical principles.
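To make the small-population point concrete, here is a minimal Python sketch. It assumes, purely for illustration, that the whole company really is exactly 70-20-10 and that people land on teams at random; the function name `prob_exact_split` is hypothetical. It computes the multinomial probability that a team of `n` people happens to split exactly 70-20-10.

```python
from math import comb


def prob_exact_split(n: int, shares=(0.7, 0.2, 0.1)) -> float:
    """Hypothetical helper: probability that a team of n people, drawn at
    random from a population that is exactly 70-20-10 (an illustrative
    assumption), itself splits exactly 70-20-10.

    Returns 0.0 when the quota does not correspond to whole people."""
    counts = [share * n for share in shares]
    if any(abs(c - round(c)) > 1e-9 for c in counts):
        return 0.0  # e.g. n = 5 would need 3.5 / 1 / 0.5 people
    counts = [round(c) for c in counts]

    # Multinomial coefficient n! / (k1! k2! k3!), built from binomials.
    coef, remaining = 1, n
    for k in counts:
        coef *= comb(remaining, k)
        remaining -= k

    # Multiply by p1^k1 * p2^k2 * p3^k3.
    p = float(coef)
    for share, k in zip(shares, counts):
        p *= share ** k
    return p


if __name__ == "__main__":
    for team_size in (5, 10, 20, 50, 100):
        print(team_size, round(prob_exact_split(team_size), 3))
```

Under these assumptions a team of ten matches the quota only about 12% of the time, and a team of five never can. Forcing the quota onto such a team therefore mislabels at least one person most of the time, even when the company-wide split is exactly right.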

Second, such simplistic systems omit a significant variable: the outcome of the group. A group that has achieved great things is likely to have a different distribution across these categories than one that hasn’t. A world champion team might have more than ‘20%’ of its members as ‘All Star’ talent; indeed, that very skew toward high performance could explain the team’s success. In another scenario, none of the team might be standouts (i.e. no one at the 20% high-achiever level), yet the teamwork could be exceptional, producing a whole greater than the sum of its parts.

But more important than the clumsy application of such distributions is their application at all. These breakdowns are ‘descriptive’ statistics, but misguided managers use them as ‘prescriptive’ rules. Misguided executives introduce policies that force managers to sort their people into such categories. The executives like the numbers because they offer a nice, repeatable, McDonald’s-like process that is easy to implement and understand on a superficial level. Unfortunately, the numbers are often misapplied in specific instances. It is rather like expecting a day with a ‘50% chance of rain’ to be rainy for half the day and dry for the other half.

Managers defend the 70-20-10 system by asserting that one just has to ‘raise the bar’, because all performance is relative: if everyone has broken the world record in their heat, only the top three go through anyway. In reality, business performance within an organisation is neither so directly competitive nor so finely measurable. Such a defence assumes a ‘purely’ competitive environment (i.e. one’s performance is 100% independent of another’s), when in reality, and by preference, a large proportion of an individual’s time is spent supporting and collaborating with their ‘rival’ performers. Untangling true relative performance here is a fool’s errand. As Mini-Microsoft described it, “We still have a weird system that celebrates the individual devoid of the team. But now it’s not so much of a knife fight when you’re doing your best to defend your people.”

While ‘70-20-10’ can be a useful benchmark against which to examine the distribution of performance assessments over large groups, it is inadvisable, and misapplied maths, to turn it into some sort of ‘rule’ by which to manage. One can look at large groups, and even smaller ones, and use it to flag questions. But if there are good answers for a skew in the distribution, the matter of 70-20-10 should be dropped in favour of respecting the manager’s professional work and tailoring the performance review to the individual worker and their own context.
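One way to ‘flag questions’ without turning the curve into a rule is a goodness-of-fit check. The Python sketch below uses Pearson’s chi-square statistic, an illustrative choice rather than anything the post, Microsoft or HP prescribes; the names `flag_skew` and `BENCHMARK` are hypothetical. It compares a group’s observed counts with the 70-20-10 benchmark and raises a flag only when the gap is larger than chance would plausibly explain.

```python
# Hypothetical benchmark shares for the three rating buckets.
BENCHMARK = {"top": 0.2, "middle": 0.7, "bottom": 0.1}

# Critical value of the chi-square distribution with 2 degrees of freedom
# (three categories -> 2 df) at the 5% significance level.
CHI2_CRIT_DF2_5PCT = 5.991


def flag_skew(observed: dict[str, int], benchmark=BENCHMARK) -> tuple[float, bool]:
    """Hypothetical helper: Pearson chi-square statistic of observed rating
    counts against the benchmark, plus whether it exceeds the 5% critical
    value. A flag means "ask the manager why", not "force the curve"."""
    n = sum(observed.values())
    expected = {k: share * n for share_key, share in [] for k in []} or {
        k: share * n for k, share in benchmark.items()
    }
    # Common rule of thumb: the chi-square approximation needs expected
    # counts of at least 5 in every bucket (so >= 50 people for a 10% bucket).
    if min(expected.values()) < 5:
        raise ValueError("group too small for a chi-square comparison")
    stat = sum((observed.get(k, 0) - e) ** 2 / e for k, e in expected.items())
    return stat, stat > CHI2_CRIT_DF2_5PCT


if __name__ == "__main__":
    print(flag_skew({"top": 35, "middle": 60, "bottom": 5}))
    print(flag_skew({"top": 22, "middle": 69, "bottom": 9}))
```

With 100 people split 35/60/5 the statistic is about 15.2, well above 5.991, so the group is flagged; a 22/69/9 split scores about 0.31 and is not. The guard on small expected counts means the check refuses groups under 50 people, which echoes the point about small teams.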

[…] not alone in feeling like this, muddling along like all the other workers who must be the 70% ers that Bruce talks about on his blog who feel like a fraud from time to […]

[…] Microsoft isn’t the only technology megalith which uses the 70-20-10 rule. Rival Google also uses it to describe the allocation of their investment in innovation. CNN Money explored the concept in its interview with CEO Eric Schmidt called ‘The 70 Percent Solution’ (thank Richard). […]

[…] Once a year, during our team offsite, the managers would take the time to explain this system, all the while taking great pains to explain why it was a good thing, in that sort of “toxic sludge is good for you” way. There are all sorts of problems with this sort of system, two of which were pointed out quite astutely by Bruce Lynn in the 70-20-10 blog: […]